
When I joined Autodesk in 2019, the Autodesk AI Lab had only one paper published at a top-tier AI conference. As of today, I’m proud to say we’ve had almost 50, including 21 this year alone.

Formed in 2018 as a part of Autodesk Research, the Autodesk AI Lab conducts fundamental and applied research in AI and machine learning with an aim to unlock a new era of AI-powered design tools for our customers. We’re committed to growing our reputation in the AI research community by expanding our teams, partnering with notable organizations, and publishing cutting-edge AI research.

Earlier this year, the Autodesk AI Lab presented five papers on the application of deep learning to computer-aided design at the Computer Vision and Pattern Recognition Conference (CVPR 2022), one of the premier conferences in computer vision and machine learning.

The work we showcased was completed in cooperation with esteemed academic institutions, including Stanford University, Massachusetts Institute of Technology (MIT), Simon Fraser University and Korea Advanced Institute of Science & Technology (KAIST). Our papers focus on making designers’ jobs easier by using AI to reverse-engineer objects and assemblies into CAD models, as well as generate the designs themselves. Below is a summary of each paper accepted.

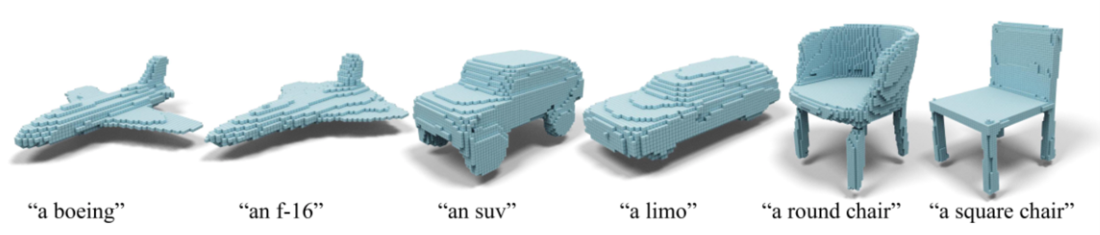

CLIP-Forge: Towards Zero-Shot Text-to-Shape Generation

CLIP-Forge lets users describe 3D objects in plain text, and the system then generates voxelized (Minecraft-style) 3D models of those objects. This is an early step toward using words to generate 3D geometry, which could help users build entire 3D scenes for games, movies, and more.

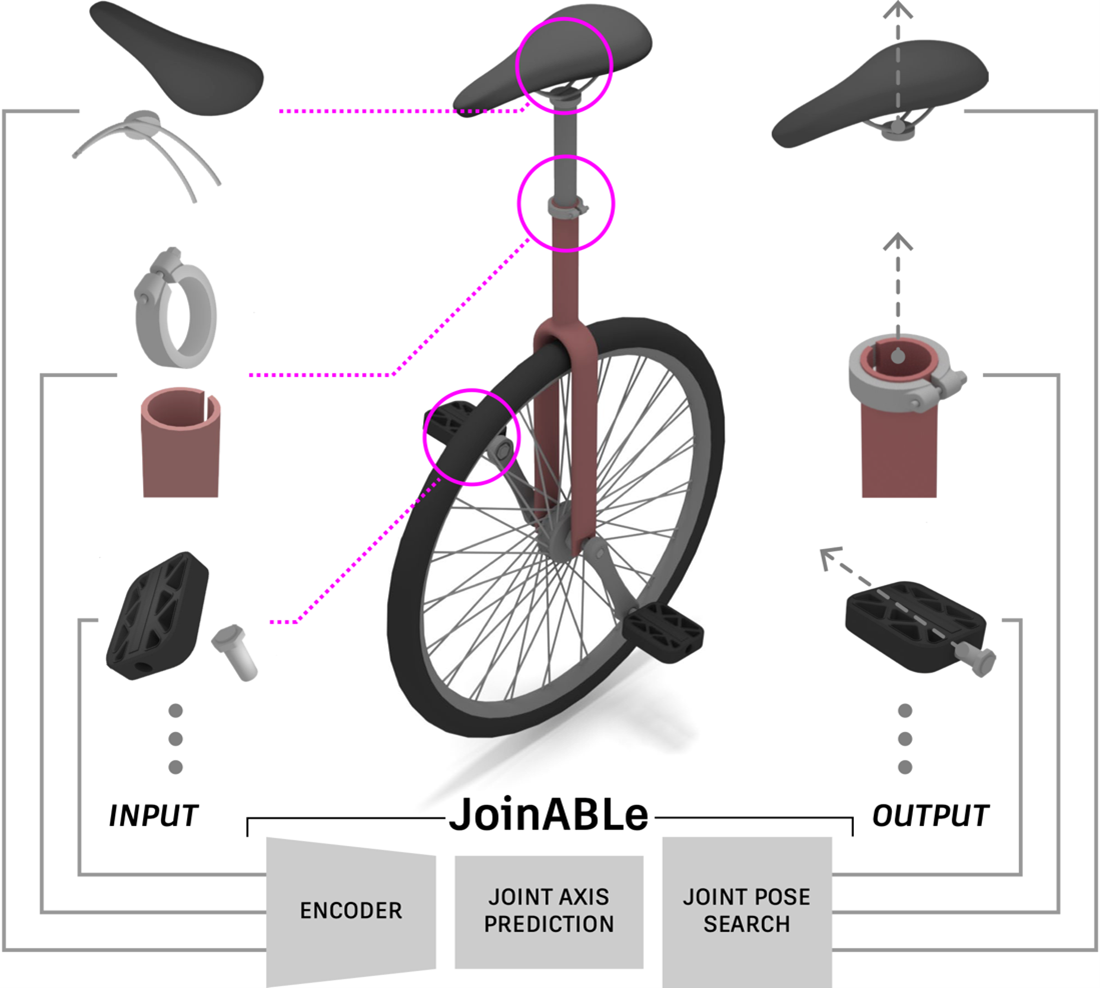

JoinABLe: Learning Bottom-up Assembly of Parametric CAD Joints

Have you ever wondered how physical objects with hundreds of parts are assembled in CAD? It’s often a tedious process that JoinABLe automates by learning how a pair of parts connects to form joints. This work, done with peers at MIT, could be applicable to everything from furniture assembly to robotic assembly lines.

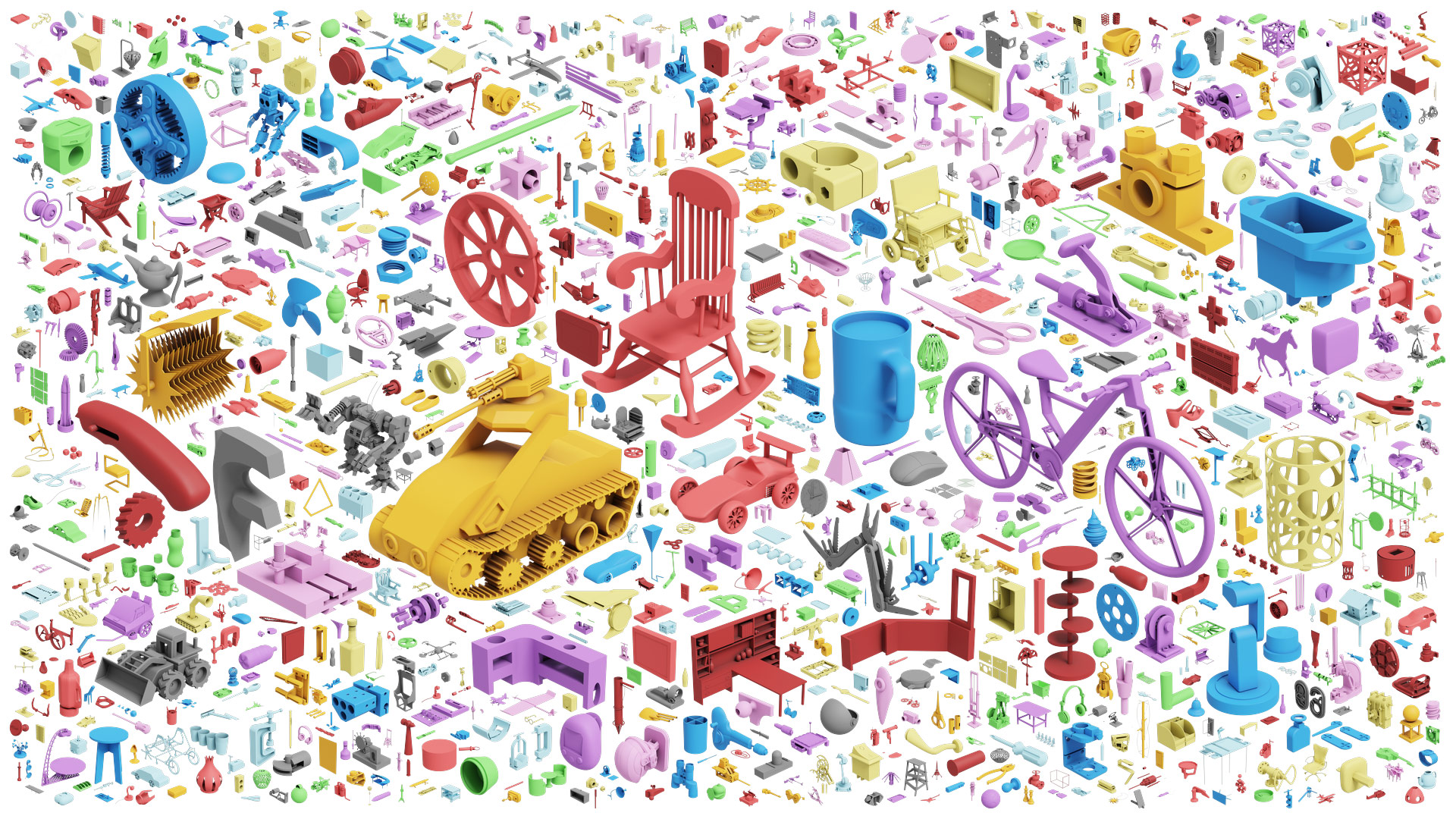

Fusion 360 Gallery Assembly Dataset

Together with the JoinABLe paper, the Autodesk AI Lab released the Fusion 360 Gallery Assembly Dataset, which offers the AI research community multi-part CAD assemblies with rich information on joints, contact surfaces, holes, and the underlying assembly graph structure. We’re committed to working with the wider research community so that others can reproduce and extend our work, and ultimately address broader research questions.
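To make the idea of an assembly graph concrete, here is a minimal sketch in which parts are nodes and joints are edges. The part names, joint types, and the `build_assembly_graph` helper are all hypothetical illustrations and do not reflect the dataset's actual schema or file format.

```python
from collections import defaultdict

# Hypothetical toy assembly: part names and joint types are illustrative only,
# not taken from the Fusion 360 Gallery Assembly Dataset.
joints = [
    ("leg_1", "seat", "rigid"),
    ("leg_2", "seat", "rigid"),
    ("seat", "backrest", "revolute"),
]


def build_assembly_graph(joints):
    """Return an adjacency map: part -> list of (neighbor, joint_type)."""
    graph = defaultdict(list)
    for part_a, part_b, joint_type in joints:
        # A joint connects two parts, so record it in both directions.
        graph[part_a].append((part_b, joint_type))
        graph[part_b].append((part_a, joint_type))
    return dict(graph)


graph = build_assembly_graph(joints)
for part, neighbors in graph.items():
    print(part, "->", neighbors)
```

A structure like this is the kind of input a graph-based learning method could consume, although the real dataset carries much richer geometric information per part and per joint.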

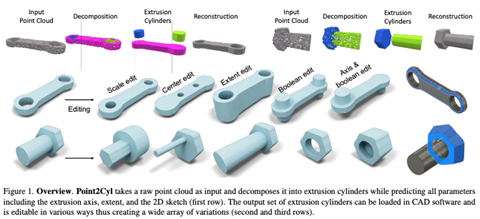

Point2Cyl: Reverse Engineering 3D Objects from Point Clouds to Extrusion Cylinders

Reverse engineering CAD models from raw geometry is a long-standing research challenge. Point2Cyl, a joint effort with Stanford University and KAIST, approaches this problem by decomposing point clouds into extrusion cylinders that are fully editable in CAD. Because nearly any object can be scanned and converted into a point cloud, the method is quite versatile.
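For readers unfamiliar with the term, an extrusion cylinder can be thought of as a closed 2D profile swept along an axis. The sketch below is a simplified illustration of that primitive, not the Point2Cyl method itself: the `ExtrusionCylinder` class is hypothetical, and it assumes the extrusion axis is the z-axis, whereas a general extrusion could use any axis.

```python
from dataclasses import dataclass


@dataclass
class ExtrusionCylinder:
    """Hypothetical primitive: a 2D polygon profile extruded along z."""
    profile: list   # closed polygon as [(x, y), ...] in the sketch plane
    z_min: float    # start of the extrusion along the z-axis
    z_max: float    # end of the extrusion along the z-axis

    def contains(self, point):
        """Return True if a 3D point lies inside the extruded solid."""
        x, y, z = point
        if not (self.z_min <= z <= self.z_max):
            return False
        # Ray-casting point-in-polygon test on the 2D profile.
        inside = False
        n = len(self.profile)
        for i in range(n):
            x1, y1 = self.profile[i]
            x2, y2 = self.profile[(i + 1) % n]
            if (y1 > y) != (y2 > y):
                x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
                if x < x_cross:
                    inside = not inside
        return inside


# A 2 x 2 square profile extruded one unit along z.
block = ExtrusionCylinder(profile=[(0, 0), (2, 0), (2, 2), (0, 2)],
                          z_min=0.0, z_max=1.0)
points = [(1.0, 1.0, 0.5), (3.0, 1.0, 0.5), (1.0, 1.0, 2.0)]
inside_flags = [block.contains(p) for p in points]
```

Because the primitive is defined by a profile and an extent, editing either one changes the solid directly, which is what makes this kind of representation convenient to edit in CAD.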

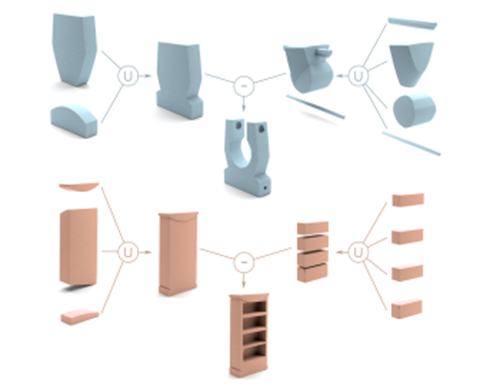

CAPRI-Net: Learning Compact CAD Shapes with Adaptive Primitive Assembly

For CAPRI-Net, we tackled a reverse engineering task in cooperation with Simon Fraser University by having the machine take a 3D object as input, decompose it into primitive shapes, and then output a CAD model. This enables the machine to learn a compact and interpretable implicit representation of a CAD model without supervision.

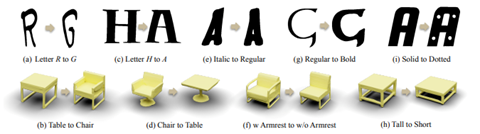

UNIST: Unpaired Neural Implicit Shape Translation Network

Style is an important aspect of design that AI can also learn and incorporate into the generation of 3D objects. With UNIST, we explored transferring the stylistic properties of an existing shape to a new category of shapes, which can save designers a lot of time and unlock several new design functions.

AI-powered design tools are essential to Autodesk’s future offerings, and the work the AI Lab publishes at top-tier AI conferences offers a glimpse into the future of design. In addition to the work showcased at CVPR this summer, the team presented SkexGen: Autoregressive Generation of CAD Construction Sequences with Disentangled Codebooks at the International Conference on Machine Learning (ICML), and Translating a Visual LEGO Manual to a Machine-Executable Plan at the European Conference on Computer Vision (ECCV). Looking ahead, the team has five papers accepted at SIGGRAPH Asia and NeurIPS.

Learn more about Autodesk’s AI efforts here.